How to Choose Between RAG, Fine-Tuning, or Hybrid Approaches

This article explains the differences between RAG, fine-tuning, and hybrid AI architectures, helping business leaders choose the right approach based on data dynamics, behavioral requirements, and time-to-market constraints. It provides a practical framework for aligning AI architecture decisions with scalability, compliance, and long-term business value.

Artificial intelligence is no longer an innovation experiment tucked away in an R&D roadmap. For many organizations, it’s becoming operational infrastructure — influencing customer experience, internal productivity, compliance processes, and even revenue generation.

As generative AI initiatives move from pilot to production, leadership teams inevitably face a strategic decision: RAG vs fine-tuning — which approach should power our AI systems?

On the surface, the choice may seem technical. In reality, it’s architectural and business-critical.

Choosing between retrieval-augmented generation and fine-tuning affects:

- How quickly you can deploy

- How reliably the system performs

- How often it needs maintenance

- How transparent and compliant it will be

- And ultimately, how scalable your AI investment becomes

The RAG vs fine-tuning discussion is often oversimplified. RAG is labeled as “cheaper and faster.” Fine-tuning is described as “more powerful.” Neither statement tells the full story. What matters is context: your data maturity, regulatory landscape, operational complexity, and long-term AI vision.

For C-level leaders, the real question isn’t which technology is more advanced. It’s when to use RAG, when to use fine-tuning, and when a hybrid model delivers a stronger strategic advantage.

This article breaks down RAG, fine-tuning, and hybrid approaches, clarifies when to use each, and outlines a practical decision framework to help you invest in the right architecture from day one.

The Business Context: Why This Decision Matters

The cost of choosing the wrong approach goes far beyond the initial budget overrun. It cascades through your organization in ways that aren't immediately obvious on a project proposal.

Choosing between RAG vs fine-tuning is not a matter of technical preference. It directly shapes how sustainable, governable, and profitable your AI initiative becomes over time.

When the wrong approach is selected, the consequences typically show up in five areas:

1. Time-to-Value Erosion

Choosing fine-tuning when RAG would suffice means watching competitors ship while you are still in the training phase. Choosing RAG when you need fine-tuning means iterations, patches, and eventually starting over, which can easily add six months to your timeline.

2. Escalating Infrastructure Costs

An architecture that looks efficient in a pilot can become expensive at scale. Fine-tuning too early may lock you into repeated retraining cycles. RAG implemented without proper data structuring can drive high retrieval and embedding costs. What begins as a proof of concept turns into a budget discussion.

Initial estimates also rarely account for the cost of switching approaches. A $100K RAG project can become a $300K project when you discover you need fine-tuning after all. A fine-tuning initiative can double in cost when you realize you still need retrieval capabilities for current information.

3. Adoption Failure

The most expensive outcome isn't money—it's building something nobody uses. AI that can't maintain your brand voice frustrates marketing teams. Systems that cite outdated information lose trust with compliance officers. Inconsistent outputs make sales teams revert to manual processes.

4. Technical Debt Accumulation

Pivoting from one approach to another mid-project doesn't mean starting fresh with lessons learned. It means maintaining the old system while building the new one, migrating users, and managing the transition. Organizations often underestimate this complexity by 3-5x.

5. Governance and Compliance Risks

For regulated industries, explainability and data traceability are not optional. The choice between retrieval-augmented generation and fine-tuning can determine whether you can clearly reference information sources or justify how an output was generated. Architecture influences audit readiness.

The Strategic Question Nobody's Asking

Here's where most organizations stumble: they start with "What AI technology should we use?" when they should start with "What business problem are we actually solving?"

Not: "Should we fine-tune or use RAG?"

But: "Do we need the AI to know facts or to behave a certain way?"

Not: "Which approach is more advanced?"

But: "Which approach delivers ROI fastest given our constraints?"

Not: "What are other companies doing?"

But: "What's the minimum viable solution that proves value to our stakeholders?"

This reframing matters because RAG and fine-tuning solve fundamentally different problems. RAG excels at making information accessible and current. Fine-tuning excels at encoding behaviors, styles, and specialized reasoning patterns. Confusing these purposes is like asking whether you need a database or an API—the question reveals a category error in thinking.

The companies that succeed with AI aren't necessarily the ones with the most sophisticated architectures. They're the ones that match their approach to their actual business need, ship quickly, prove value, and iterate from there.

The difference between a $100K experiment and a scalable AI system often comes down to architectural decisions made in the first 30 days. If you want to avoid rebuilding six months from now, start with the right foundation.

What Is RAG (Retrieval-Augmented Generation)?

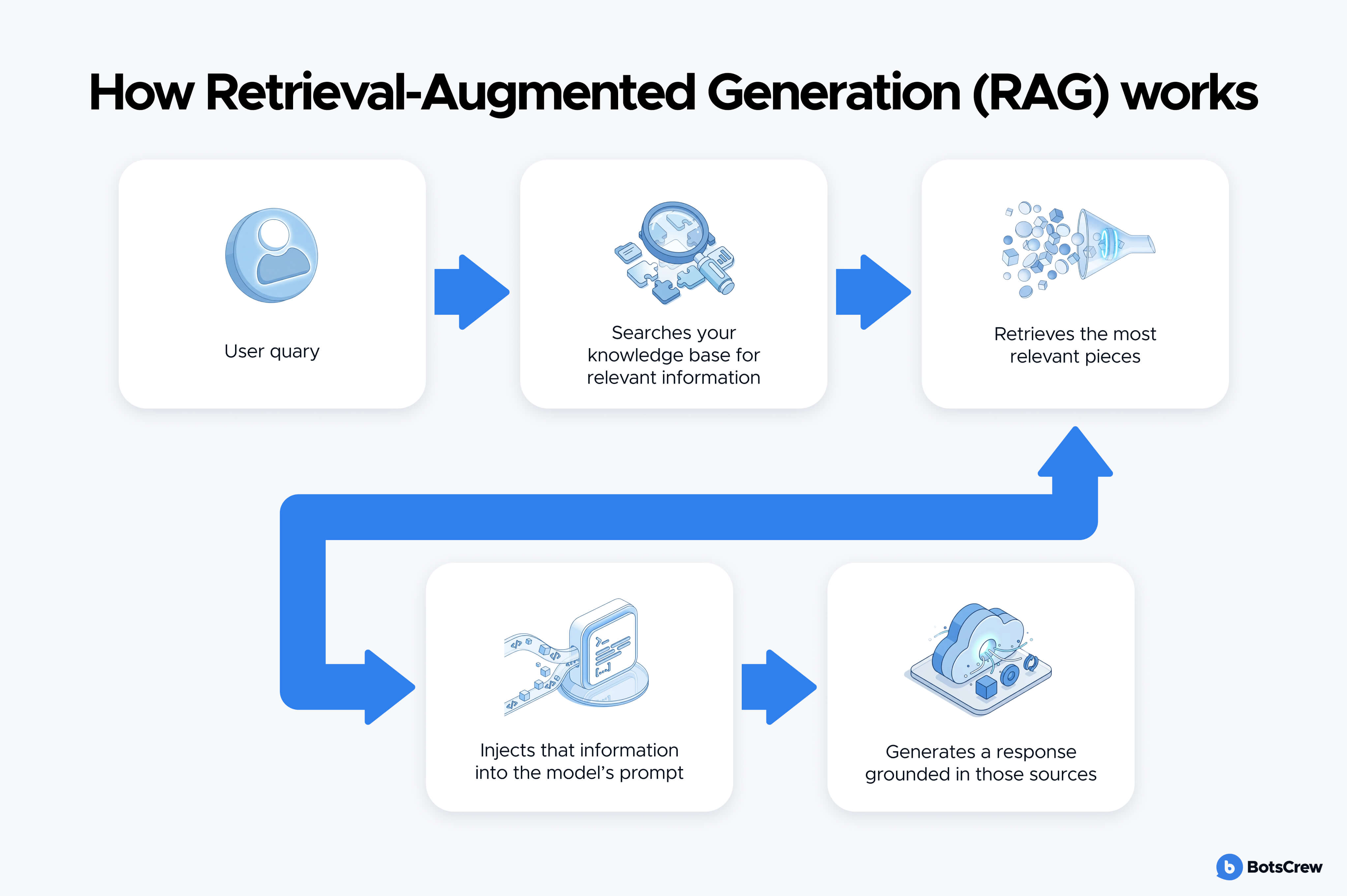

Retrieval-Augmented Generation (RAG) connects a large language model (LLM) to an external knowledge source — such as internal documents, product manuals, policies, databases, or CRM records.

Instead of relying solely on what the model learned during pretraining, the system:

- Searches your knowledge base for relevant information

- Retrieves the most relevant pieces

- Injects that information into the model’s prompt

- Generates a response grounded in those sources

The AI model itself remains unchanged. You're not teaching it new information—you're giving it access to information on demand.

It's analogous to how a well-prepared consultant works: they don't memorize every detail about your company, but they know exactly where to find information and how to synthesize it into useful answers.
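
The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `KNOWLEDGE_BASE` contents, the word-overlap scoring, and the `generate` callable (a stand-in for whatever LLM you call) are all assumptions made for the example. Real systems typically use embedding-based search over a vector index.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base; a real system would use a document store or vector index.
KNOWLEDGE_BASE = {
    "refund-policy": "Refunds are available within 30 days of purchase with a valid receipt.",
    "shipping-policy": "Standard shipping takes 5 business days. Express shipping takes 2 business days.",
    "warranty": "All devices include a 12 month limited warranty covering manufacturing defects.",
}

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, k=2):
    """Steps 1 and 2: search the knowledge base and keep the top-k matching documents."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id) for doc_id, text in KNOWLEDGE_BASE.items()]
    scored.sort(reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]

def build_prompt(query, doc_ids):
    """Step 3: inject the retrieved passages into the prompt, tagged with their ids."""
    context = "\n".join(f"[{d}] {KNOWLEDGE_BASE[d]}" for d in doc_ids)
    return (
        "Answer using only the sources below and cite their ids.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )

def answer(query, generate):
    """Step 4: generate a grounded response; `generate` is any function mapping a prompt to text."""
    doc_ids = retrieve(query)
    return generate(build_prompt(query, doc_ids)), doc_ids
```

Note that updating the system's knowledge here means editing `KNOWLEDGE_BASE`; the model behind `generate` is never retrained. Returning the document ids alongside the answer is what makes source citation possible.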

Why Companies Choose RAG

For many organizations weighing retrieval-augmented generation against fine-tuning, RAG offers three clear advantages:

- Up-to-date information without retraining the model

- Greater transparency, as responses can reference source documents

- Faster deployment, especially for knowledge-heavy use cases

This makes RAG particularly effective for:

- Customer support assistants

- Internal knowledge copilots

- Compliance and policy Q&A

- Product documentation search

When the main challenge is access to accurate, frequently updated information, RAG is often the right starting point.

Where RAG Has Limits

RAG does not fundamentally change how a model thinks — it only improves what it knows at the moment of answering. If your use case requires highly specialized reasoning, strict formatting consistency, or deep domain adaptation, retrieval alone may not be enough.

Also, the quality ceiling is determined by your retrieval accuracy. If the system retrieves the wrong documents, the AI generates answers based on irrelevant information. This means RAG performance depends heavily on how well you've organized and structured your knowledge base.
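
One way to make this ceiling visible is to measure retrieval on its own, before looking at generated answers. The sketch below computes a simple hit rate: the share of test questions for which the expected document shows up in the top results. The `toy_retrieve` function, the document ids, and the labeled questions are hypothetical placeholders; in practice you would plug in your real retriever and a set of questions with known source documents.

```python
def hit_rate_at_k(retrieve, labeled_queries, k=3):
    """Fraction of queries whose expected document appears in the top-k retrieved ids."""
    if not labeled_queries:
        return 0.0
    hits = sum(1 for query, expected in labeled_queries if expected in retrieve(query)[:k])
    return hits / len(labeled_queries)

def toy_retrieve(query):
    """Hypothetical keyword retriever used only to exercise the metric."""
    index = {
        "refund": ["refund-policy", "warranty"],
        "shipping": ["shipping-policy"],
    }
    for keyword, doc_ids in index.items():
        if keyword in query.lower():
            return doc_ids
    return []

# Each pair is (question, id of the document that should be retrieved).
labeled = [
    ("How do I get a refund?", "refund-policy"),
    ("Shipping times?", "shipping-policy"),
    ("Is my device under warranty?", "warranty"),
]

score = hit_rate_at_k(toy_retrieve, labeled)
print(f"hit rate: {score:.2f}")
```

In this toy run the warranty question retrieves nothing, so two of three questions succeed. A low score at this stage points to a knowledge base or retrieval problem that no change to the language model will fix.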

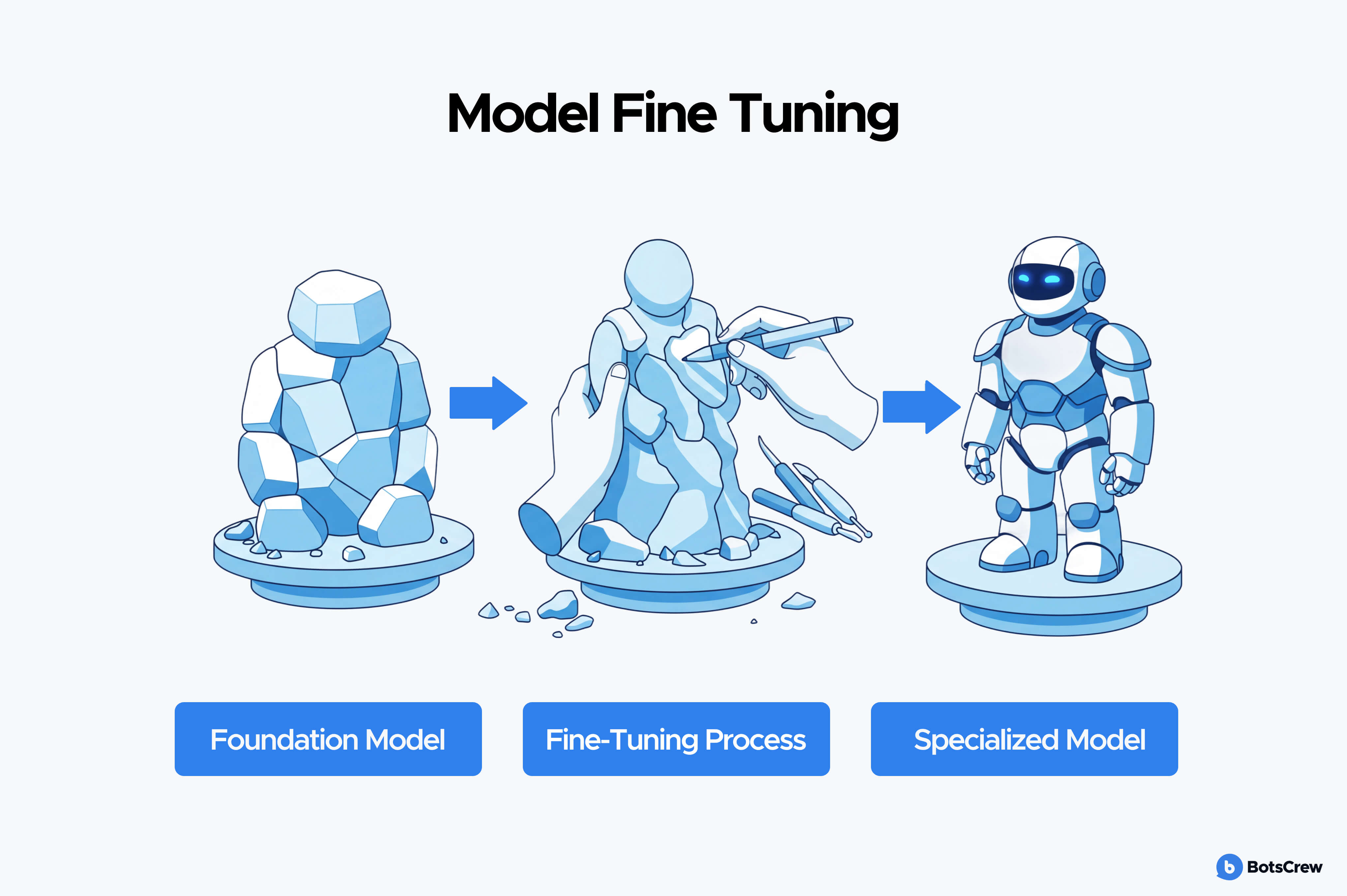

What Is Fine-Tuning?

Fine-tuning is teaching the AI to think, respond, and behave like your organization. You're taking a general-purpose AI model and continuing its training on your specific data, adjusting the model's internal parameters so it naturally produces outputs that match your needs—whether that's your writing style, your domain expertise, or your specific way of solving problems.

Think of it as the difference between hiring a consultant who researches your company (RAG) and hiring someone who has worked in your industry for ten years (fine-tuning). The latter has internalized the patterns, vocabulary, and reasoning approaches that your domain requires.

During fine-tuning, the model is trained on curated examples that reflect how you want it to respond. This could include:

- Domain-specific terminology

- Legal or medical reasoning structures

- Brand voice and tone

- Strict output formatting

- Industry-specific workflows

Over time, the model becomes more aligned with your use case — not just more informed, but more specialized.
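
To make "curated examples" concrete, the sketch below checks a small training set before it is sent to any training job. It assumes a chat-style JSONL layout (one JSON object per line with a `messages` list of `role` and `content` pairs), which is a common convention but not a universal one; check the format your provider or framework expects. The example records and the validation rules are illustrative assumptions.

```python
import json

def validate_examples(jsonl_text):
    """Split JSONL training examples into usable records and a list of (line, problem) pairs."""
    valid, problems, seen = [], [], set()
    for line_no, line in enumerate(jsonl_text.splitlines(), start=1):
        if not line.strip():
            continue  # ignore blank lines
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            problems.append((line_no, "invalid JSON"))
            continue
        messages = record.get("messages") if isinstance(record, dict) else None
        if not isinstance(messages, list) or not messages or not all(isinstance(m, dict) for m in messages):
            problems.append((line_no, "missing messages"))
            continue
        last = messages[-1]
        has_user = any(m.get("role") == "user" for m in messages)
        has_reply = last.get("role") == "assistant" and str(last.get("content", "")).strip() != ""
        if not (has_user and has_reply):
            problems.append((line_no, "needs a user turn and a final assistant reply"))
            continue
        key = json.dumps(messages, sort_keys=True)
        if key in seen:
            problems.append((line_no, "duplicate"))  # repeated examples skew training
            continue
        seen.add(key)
        valid.append(record)
    return valid, problems

# Hypothetical examples: one good record, a duplicate, an incomplete record, and a broken line.
good = {"messages": [
    {"role": "user", "content": "Summarize clause 4 for a non-lawyer."},
    {"role": "assistant", "content": "Clause 4 limits liability to the fees paid in the last 12 months."},
]}
no_reply = {"messages": [{"role": "user", "content": "Draft a renewal notice."}]}
sample = "\n".join([json.dumps(good), json.dumps(good), json.dumps(no_reply), "{not json"])

valid, problems = validate_examples(sample)
print(len(valid), "usable;", problems)
```

Counting usable examples this way gives a realistic picture of how much training data you actually have, as opposed to how many raw records exist.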

Why Organizations Choose Fine-Tuning

When deciding between fine-tuning and RAG, fine-tuning becomes relevant when precision and consistency matter more than dynamic knowledge updates.

It is particularly effective for:

- Highly specialized industries

- Structured document generation (contracts, reports, medical coding)

- Consistent brand communication at scale

- High-volume, repeatable tasks

Once deployed, fine-tuned models can offer lower latency and more predictable outputs because they don’t rely on external retrieval steps.

Where Fine-Tuning Introduces Complexity

Fine-tuning comes with trade-offs:

- Higher upfront investment

- Requirement for high-quality, labeled training data

- Ongoing retraining when business requirements change

- Greater governance and version control complexity

Updates require retraining cycles. When your processes change or you want to incorporate new patterns, you need to retrain the model. This isn't as simple as updating a document—it requires preparing new training data, running training jobs (which can take hours to days), evaluating the results, and deploying the updated model. Plan for this operational overhead.

You need substantial, high-quality training data. Effective fine-tuning typically requires hundreds to thousands of examples, depending on your use case. These examples need to be representative of the task, properly formatted, and ideally curated for quality. Creating this dataset is often the most time-consuming part of the process.

The model becomes specialized, sometimes at the cost of general capability. A model fine-tuned extensively on legal contracts might become exceptional at legal language but less capable at casual conversation. This specialization is often exactly what you want, but it's a trade-off to consider.

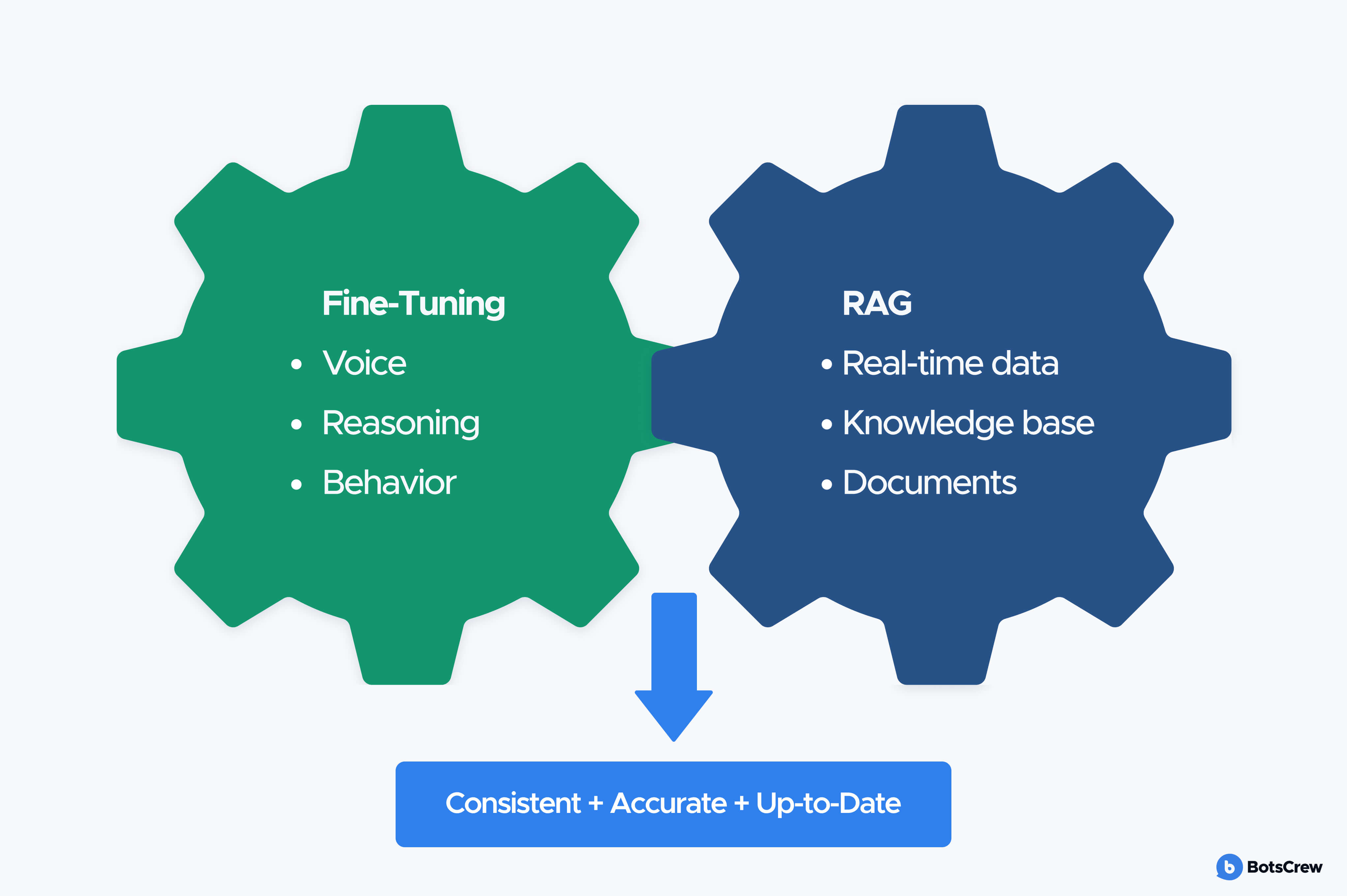

The Hybrid Approach: When the Answer Is “Both”

In many enterprise scenarios, framing the decision as RAG vs fine-tuning creates a false binary. In practice, the most robust AI systems often combine both.

RAG and fine-tuning solve different problems. One improves access to knowledge. The other improves behavioral precision. When a use case requires both dynamic information and domain-specific reasoning, a hybrid architecture becomes the logical choice.

A hybrid approach combines fine-tuning for consistent behavior with RAG for access to current information. You fine-tune a model to understand your domain, speak in your voice, and follow your methodologies—then you augment it with retrieval so it can access up-to-date facts, recent documents, and dynamic information.

This is like having that industry veteran who has also mastered your company's current knowledge management system. They bring deep expertise and consistent judgment, while also knowing exactly where to find the latest information.

In practice, this means you're running both systems. You fine-tune a model on examples that teach it your domain expertise, output formatting, reasoning patterns, or brand voice. Then you implement RAG on top of that fine-tuned model, giving it retrieval access to your knowledge base. When a query comes in, the system retrieves relevant documents and passes them to your fine-tuned model, which applies its learned behaviors while incorporating the retrieved information.
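
The request flow described above might look like the following sketch. Both `fake_retrieve` and `fake_fine_tuned_model` are hypothetical stand-ins: the first represents your retrieval layer, the second represents a call to your fine-tuned model. The document id, the passage text, and the output format are invented for illustration.

```python
def hybrid_answer(query, retrieve, fine_tuned_generate, k=3):
    """Retrieve current passages, then let the fine-tuned model answer with them in context."""
    passages = retrieve(query)[:k]  # list of (doc_id, text) pairs
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    prompt = f"Sources:\n{context}\n\nQuestion: {query}"
    return {
        "answer": fine_tuned_generate(prompt),            # behavior comes from fine-tuning
        "sources": [doc_id for doc_id, _ in passages],    # facts come from retrieval
    }

def fake_retrieve(query):
    """Stand-in for the retrieval layer; returns one placeholder passage."""
    return [("pricing-sheet", "Enterprise plan: volume discount applies above 500 seats.")]

def fake_fine_tuned_model(prompt):
    """Stand-in for a fine-tuned model that always answers in a learned house format."""
    return "POSITIONING: lead with the volume discount. NEXT STEP: confirm seat count."

result = hybrid_answer("What discount can we offer a 600-seat prospect?", fake_retrieve, fake_fine_tuned_model)
print(result["answer"])
print(result["sources"])
```

The two components stay independently replaceable: you can refresh the knowledge base without retraining, and retrain the model without touching the documents.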

When Hybrid Makes Strategic Sense

Organizations evaluating RAG vs fine-tuning should consider hybrid approaches when:

- Knowledge changes frequently, but responses must follow strict domain logic

- Regulatory requirements demand both traceability and precision

- The AI solution is a long-term product, not a short-term automation

- The cost of errors is high

For example:

- A healthcare assistant may use RAG for updated clinical guidelines, while fine-tuning ensures medical reasoning patterns are consistent.

- A legal automation tool may retrieve case references dynamically, while a fine-tuned model maintains contract structure and risk logic.

- An enterprise sales copilot may access CRM data via RAG while being fine-tuned for industry-specific positioning.

Strategic Decision Framework for C-Level Leaders

The debate over when to use RAG and when to use fine-tuning should not start with model architecture. It should start with business intent.

Before allocating budget or assigning engineering resources, leadership teams should pressure-test the initiative against a structured set of questions. The answers typically make the architectural direction clear.

1. How Often Does Your Knowledge Change?

If your AI system must reflect:

- Frequently updated policies

- Product releases

- Pricing adjustments

- Regulatory changes

RAG is often the stronger foundation. It allows updates without retraining.

If your knowledge base is relatively stable but the reasoning patterns must be highly specialized, fine-tuning may be more appropriate.

This is often the first filter in deciding when to use RAG vs fine-tuning.

2. Do You Need Knowledge Access or Behavioral Precision?

If your AI needs to know facts that change—product specifications, regulatory updates, market data, inventory levels—you're solving a knowledge problem. RAG is likely your answer.

If your AI needs to consistently perform a task in a specific way—maintain brand voice, follow a proprietary methodology, apply specialized reasoning—you're solving a behavior problem. Fine-tuning is likely your answer.

Most organizations can clearly identify which category they're in within five minutes of honest discussion. If you can't, you probably haven't defined your use case clearly enough to make any architectural decision yet.

3. What Is Your Time-to-Market Constraint?

Need results in 4-6 weeks to demonstrate ROI or respond to competitive pressure? RAG is your only realistic option. Fine-tuning requires 8-12 weeks minimum, often longer when you account for data preparation and iteration.

Fine-tuning requires:

- Curated datasets

- Training cycles

- Evaluation loops

- Governance processes

For organizations under competitive pressure, this timing difference is significant.

4. What Are Your Compliance and Explainability Requirements?

In regulated industries, traceability matters.

RAG-based systems can reference specific documents or sources, which simplifies audit processes. Fine-tuned models may deliver strong performance but often provide less transparent reasoning paths.

When evaluating retrieval-augmented generation vs fine-tuning, compliance is rarely a secondary consideration; it’s often decisive.

5. What Is Your Budget Structure: CapEx vs OpEx?

Fine-tuning often requires higher upfront investment in data preparation and training.

RAG may distribute costs more gradually across infrastructure and maintenance.

Understanding whether your organization prefers concentrated initial investment or scalable operational spending helps frame the decision.
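
A rough way to compare the two spending profiles is to plot cumulative cost over time and look for the crossover month. The sketch below does that arithmetic. Every figure in it is a made-up placeholder, not a benchmark, and the assumption that the fine-tuned option has the lower monthly cost will not hold in every project (frequent retraining can reverse it). Substitute your own estimates.

```python
def cumulative_cost(upfront, monthly, months):
    """Total spend after a number of months: one-time investment plus recurring cost."""
    return upfront + monthly * months

def break_even_month(option_a, option_b, horizon=60):
    """First month in which option_a's cumulative cost is no higher than option_b's, or None."""
    for month in range(1, horizon + 1):
        if cumulative_cost(*option_a, month) <= cumulative_cost(*option_b, month):
            return month
    return None

# Placeholder figures as (upfront, monthly), in dollars.
fine_tuning = (200_000, 5_000)   # concentrated initial investment
rag = (60_000, 15_000)           # spend distributed over time

month = break_even_month(fine_tuning, rag)
print("fine-tuning catches up in month:", month)
```

With these placeholder inputs, the higher upfront option only catches up after more than a year, which is why the expected lifetime of the system matters as much as the initial quote.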

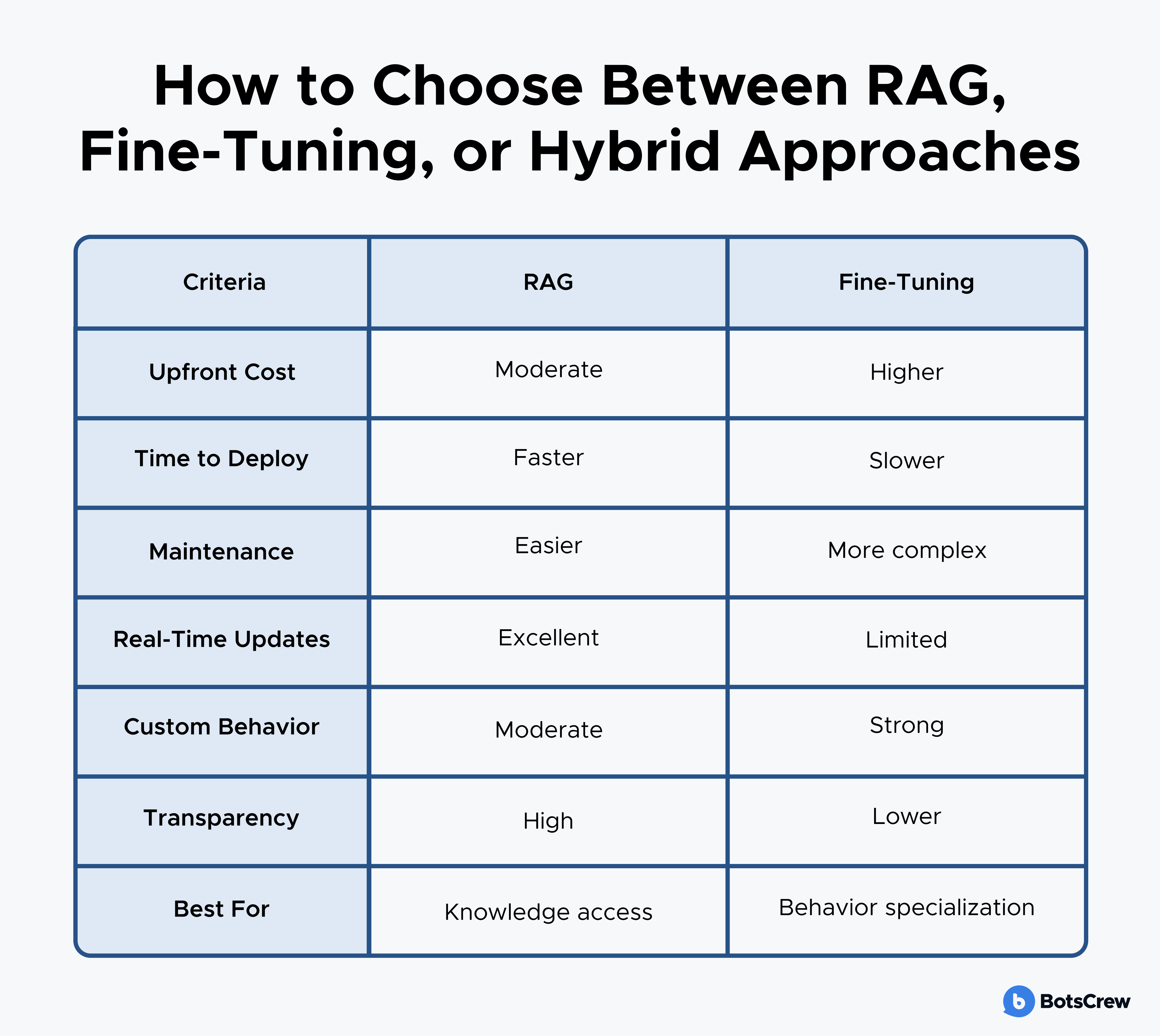

The Evaluation Matrix: Matching Approach to Business Reality

Use this framework to evaluate which approach aligns with your specific situation. Rate your organization honestly on each dimension.

Data Characteristics:

Update frequency:

- Daily/Weekly changes → Strong RAG signal

- Monthly updates → RAG or fine-tuning viable

- Quarterly or less → Fine-tuning viable

- Mix of stable and dynamic → Hybrid candidate

Source traceability requirements:

- Must cite sources for compliance → RAG required

- Citation builds user trust → RAG preferred

- Consistency matters more than attribution → Fine-tuning viable

Training data availability:

- Fewer than 500 quality examples → RAG only

- 500-2,000 examples → Fine-tuning possible but risky

- 2,000-10,000 examples → Fine-tuning viable

- 10,000+ diverse examples → Fine-tuning advantageous

Business Constraints:

Timeline pressure:

- Need MVP in 4-6 weeks → RAG

- Can invest 2-3 months → Fine-tuning possible

- Building a 6+ month strategic initiative → Any approach viable

Budget reality (initial investment):

- Under $75K → RAG

- $75K-$200K → RAG or fine-tuning

- $200K-$500K → Fine-tuning or hybrid

- $500K+ → Hybrid approaches justified

Risk and Criticality:

Business impact of errors:

- Low (internal tool, experimental) → Start with RAG

- Medium (customer-facing, important) → Either approach with proper testing

- High (regulated, mission-critical) → Invest in thorough evaluation, likely hybrid

Risk tolerance:

- Testing the waters → RAG (lower commitment, faster validation)

- Validated use case → Fine-tuning or hybrid

- Strategic bet → Full evaluation warranted

Organizational Readiness:

Technical team capability:

- Strong engineering, limited ML → RAG

- ML expertise available → Fine-tuning viable

- Full AI/ML team → Any approach viable

- Plan to use external partners → Dependent on partner capability

Operational maturity:

- First AI implementation → RAG (simpler operations)

- Some AI in production → Fine-tuning manageable

- AI-native organization → Hybrid approaches viable
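
The matrix can also be applied as a simple tally. The sketch below encodes a subset of the dimensions above and counts how many answers point to each approach. Treating every dimension as one equal vote is a simplifying assumption made for this example, as are the dictionary keys; the tally also ignores that some signals in the matrix are hard constraints ("RAG required", "RAG only") rather than preferences. Use it to structure a discussion, not to replace one.

```python
from collections import Counter

# Subset of the evaluation matrix; keys are hypothetical labels for the rows above.
MATRIX = {
    "update_frequency": {
        "daily_weekly": ["rag"],
        "monthly": ["rag", "fine_tuning"],
        "quarterly_or_less": ["fine_tuning"],
        "mixed": ["hybrid"],
    },
    "traceability": {
        "must_cite": ["rag"],
        "citation_builds_trust": ["rag"],
        "consistency_over_attribution": ["fine_tuning"],
    },
    "training_examples": {
        "under_500": ["rag"],
        "500_to_2000": ["fine_tuning"],   # "possible but risky" in the matrix
        "2000_to_10000": ["fine_tuning"],
        "over_10000": ["fine_tuning"],
    },
    "timeline": {
        "4_6_weeks": ["rag"],
        "2_3_months": ["fine_tuning"],
        "6_plus_months": ["rag", "fine_tuning", "hybrid"],
    },
    "budget": {
        "under_75k": ["rag"],
        "75k_to_200k": ["rag", "fine_tuning"],
        "200k_to_500k": ["fine_tuning", "hybrid"],
        "over_500k": ["hybrid"],
    },
}

def tally(answers):
    """Count one vote per approach for each answered dimension."""
    votes = Counter()
    for dimension, choice in answers.items():
        votes.update(MATRIX[dimension][choice])  # KeyError signals an unknown dimension or answer
    return votes

# Hypothetical profile: fast-changing content, strict citations, little training data.
answers = {
    "update_frequency": "daily_weekly",
    "traceability": "must_cite",
    "training_examples": "under_500",
    "timeline": "4_6_weeks",
    "budget": "under_75k",
}

print(tally(answers).most_common())
```

A split result, where no approach clearly leads, is itself useful information: it usually means the use case needs sharper definition or is a hybrid candidate.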

Red Flags That You're Not Ready to Decide

Sometimes the right answer is that you need to do more work before choosing an approach. Watch for these warning signs:

You can't articulate the success metric. If you don't know what "good enough" looks like quantitatively, you can't evaluate which approach gets you there. Define your threshold first.

Your team is debating technology, not outcomes. If conversations focus on "RAG is more modern" or "fine-tuning is more sophisticated" rather than "which solves our specific problem," you're not ready.

You don't know what data you actually have. Saying "we have lots of documents" isn't the same as knowing you have 50,000 well-structured product docs versus 5,000 emails and meeting notes. Audit your data first.

Stakeholders have contradictory requirements they haven't reconciled. If compliance needs citations while marketing needs consistent brand voice, and nobody's prioritized these needs, no architecture will satisfy everyone. Align on priorities first.

You're planning for scale you haven't validated. Building a hybrid system to handle millions of queries when you haven't proven hundreds of people will use it is premature optimization. Prove the value, then scale.

When you see these red flags, pause the architecture decision. Invest 2-4 weeks clarifying requirements, auditing data, and building alignment. This upfront work will save you months of building the wrong thing.

Making the Right Decision From Day One

The choice between RAG and fine-tuning is rarely about technical capability. It’s about business clarity, data maturity, and strategic alignment.

At BotsCrew, we work directly with CTOs, COOs, and AI leaders to:

- Audit data readiness

- Define measurable AI success metrics

- Evaluate RAG, fine-tuning, and hybrid architectures

- Design scalable, production-ready AI systems

- Align technical decisions with business outcomes

If you’re navigating this decision — or unsure whether you’re ready to make it — a structured architecture assessment can prevent costly missteps.

Book a consultation with BotsCrew’s AI experts and make your next AI investment with confidence.