How to Spot Red Flags in an AI Proposal Before It Costs You Millions

Learn to identify the warning signs in any AI business proposal that signal wasted investment. A practical guide for executives evaluating AI project proposals.

💡TL;DR — The Six-Flag Test for AI Proposals

The six most common red flags in an AI proposal are: (1) vague success metrics, (2) technology-first framing with no business problem anchor, (3) unrealistic timelines that ignore data preparation, (4) no clear ownership or change-management plan, (5) opacity around data, privacy, and compliance, and (6) overpromising on autonomy without human oversight.

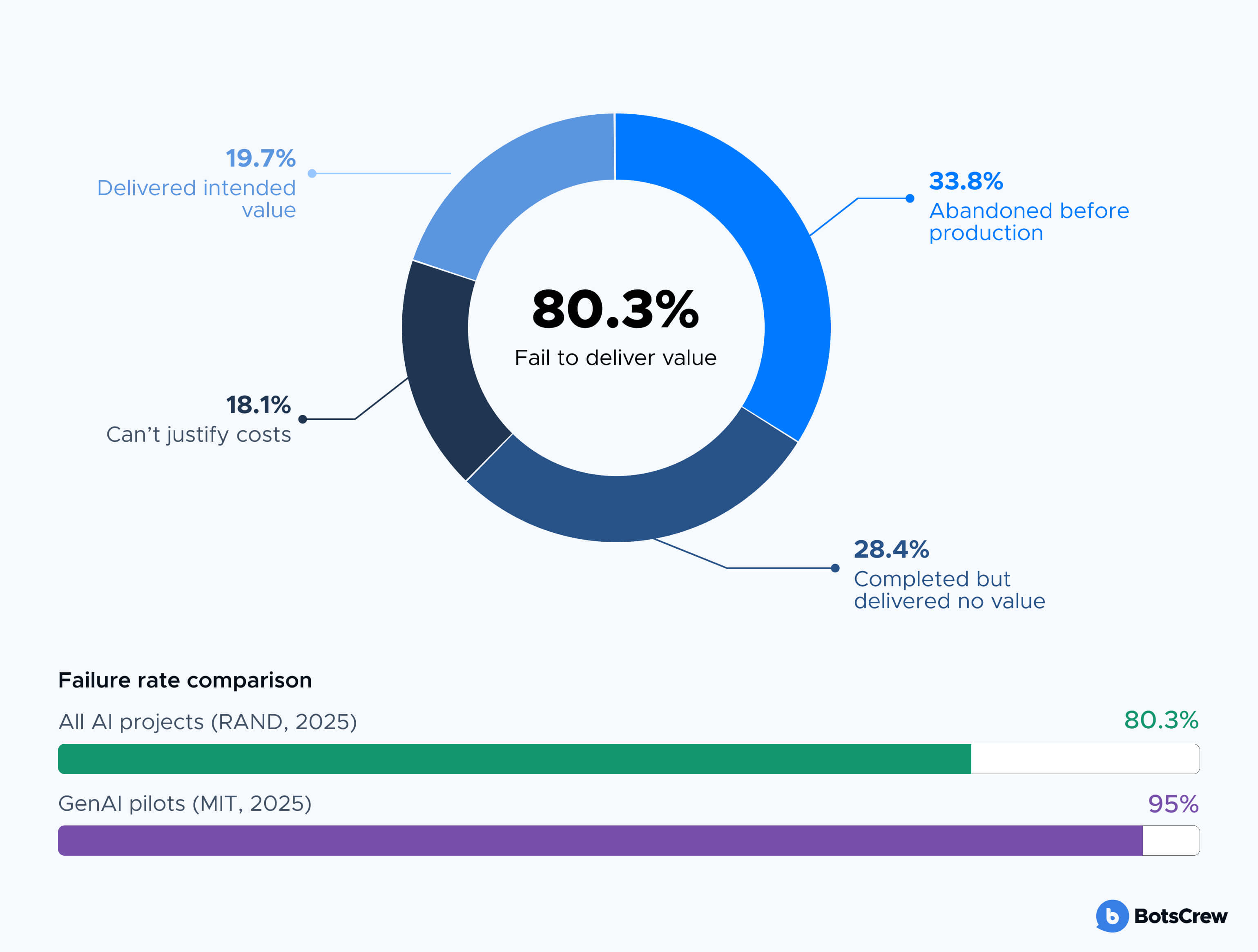

Most AI proposals look impressive with their polished decks, ambitious timelines, and confident claims about ROI. And yet, research from RAND Corporation shows that more than 80% of enterprise AI projects fail to deliver their intended business value.

The gap between what gets promised in an AI proposal and what actually gets delivered in production has become one of the most expensive blind spots in enterprise decision-making. In 2025 alone, S&P Global found that 42% of companies abandoned most of their AI initiatives, up from just 17% the year before. The average cost per abandoned project? $7.2 million.

The problem rarely starts with bad technology. It starts with bad proposals — documents that sound convincing but contain structural weaknesses that experienced evaluators learn to recognise. This article breaks down the most common red flags in an AI business proposal, so you can filter out the noise before your organisation becomes another statistic.

Why AI Proposals Deserve a Different Level of Scrutiny

Evaluating an AI proposal is not the same as evaluating a standard technology procurement. Traditional software has well-understood implementation patterns. You know what an ERP rollout looks like. You know what a CRM migration entails. AI is different — and the proposals that land on your desk reflect that difference, for better or worse.

AI projects carry unique risks that standard vendor assessments often miss. According to a recent AI survey, 77% of AI project failures are organisational in nature — poor adoption, undefined ownership, or misaligned success criteria. Only 23% trace back to model performance or data quality issues. Yet most AI proposals focus almost entirely on the technology, burying or ignoring the organisational complexity that will determine whether the project succeeds.

An RFP evaluation process for AI needs to account for this reality. The questions you ask of an AI vendor should be fundamentally different from those you'd ask of someone selling a SaaS platform. You're not just buying software. You're buying a capability that requires your data, your workflows, and your people to function.

Red Flag #1: Vague or Missing Success Metrics

The single most reliable predictor of AI project failure is the absence of clearly defined success criteria before work begins. Recent research found that projects with metrics defined before approval saw a 4.5x improvement in success rates compared to those without.

And yet, a striking number of AI project proposals arrive with goals that read more like marketing copy than measurable objectives. Watch for language such as:

- "Leverage AI to unlock operational efficiencies"

- "Transform the customer experience through intelligent automation"

- "Harness the power of AI to drive growth"

These phrases sound strategic but commit to nothing. A credible AI business proposal specifies what will be measured, how it will be measured, what the baseline is today, and what success looks like in concrete terms — a percentage reduction in processing time, a measurable improvement in accuracy, a quantifiable cost saving.

If a proposal cannot articulate the business problem it solves in a single, measurable sentence, it is not ready for approval. Ask the vendor or internal team to rewrite the proposal with specific AI metrics and KPIs before it advances to the next stage.
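One way to apply this check is to capture each proposed metric as a small structured record and send back any that lack a baseline, a target, or a measurement method. The sketch below is illustrative only: the `SuccessMetric` class, its field names, and the example figures are assumptions made for this example, not part of any standard or of the research cited above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SuccessMetric:
    """One measurable success criterion in a proposal (hypothetical structure)."""
    name: str
    unit: str
    baseline: Optional[float] = None   # value today, before the project starts
    target: Optional[float] = None     # value that would count as success
    measurement_method: str = ""       # how and where the value is measured

    def missing_fields(self) -> list[str]:
        """Return the fields a reviewer would still need the proposer to supply."""
        missing = []
        if self.baseline is None:
            missing.append("baseline")
        if self.target is None:
            missing.append("target")
        if not self.measurement_method.strip():
            missing.append("measurement_method")
        return missing

    def is_measurable(self) -> bool:
        return not self.missing_fields()


# A goal written as marketing copy: nothing to measure against.
vague = SuccessMetric(name="Unlock operational efficiencies", unit="n/a")

# A goal written as a measurable objective (figures are made up for illustration).
concrete = SuccessMetric(
    name="Invoice processing time",
    unit="minutes per invoice",
    baseline=12.0,
    target=8.0,
    measurement_method="Monthly average from the finance workflow system",
)

print(vague.missing_fields())
print(concrete.is_measurable())
```

A metric that reports any missing fields is a prompt to go back to the vendor or internal team before the proposal advances.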

Red Flag #2: Technology-First Framing With No Business Problem Anchor

A well-constructed AI proposal starts with a business problem and works backward to the technology that solves it. A weak proposal does the opposite — it starts with a technology (large language models, computer vision, AI agents) and then searches for a justification.

This distinction matters enormously. MIT's 2025 research found that 95% of generative AI pilots failed to deliver measurable impact on the P&L. Among the successful 5%, the common thread was not better technology — it was tighter alignment between the AI solution and a specific, well-understood business workflow. Specialised, vendor-led projects that targeted specific back-office processes succeeded roughly 67% of the time, while broad internal builds succeeded only about 33%.

When reviewing an AI proposal or AI agent development proposal, ask: does this document spend more time describing the technology or describing the problem? If the ratio skews toward the technology, that's a warning sign. The best proposals dedicate the majority of their space to articulating the current state, the desired state, and the measurable gap between them.

Red Flag #3: Unrealistic Timelines and Understated Data Requirements

Speed is appealing. When an AI project proposal promises a working prototype in two days and full deployment in two weeks, it's tempting to sign off. But these timelines frequently ignore the single largest variable in any AI implementation: data readiness.

Gartner has predicted that 60% of AI projects unsupported by AI-ready data will be abandoned through 2026. Informatica's 2025 survey found that data quality and readiness (43%) and lack of technical maturity (43%) were the top obstacles to AI success.

A credible proposal accounts for the data work. It includes time for data audits, quality assessments, pipeline development, and governance. It budgets for the fact that organisations typically need to allocate 40-50% of total AI project resources to data preparation alone. If a proposal treats data as a minor line item or assumes your existing data infrastructure is "good enough," that's not optimism — it's a gap in due diligence.

Similarly, be cautious of proposals that gloss over integration complexity. An AI model that performs well in isolation but cannot integrate with your existing systems, compliance workflows, or operational processes has no business value. The proposal should explicitly address how the solution connects to your current technology stack and what changes are required on your side.

Red Flag #4: No Clear Ownership Model or Change Management Plan

Here's a pattern that appears in 41% of underperforming AI projects, according to a recent analysis: the project gets technically delivered, but nobody in the organisation actually owns it. There's no designated business owner, no adoption plan, no training programme, and no process for acting on the AI's outputs.

Any AI business proposal should answer three organisational questions: Who owns this after delivery? Who will use it daily? And what changes to existing workflows are required for it to generate value?

Look for a dedicated section on change management. Look for a budget allocation — credible implementations typically dedicate 20-30% of the total budget to adoption, training, and organisational change. If that's missing, the proposal is optimising for delivery, not for value.
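The budget rules of thumb in this article (roughly 40-50% of resources for data preparation and 20-30% for change management) can be turned into a quick sanity check on a proposal's line items. This is a minimal sketch under stated assumptions: the category names, the `budget_warnings` function, and the example amounts are hypothetical, and treating the data-preparation share as a share of budget is a simplification.

```python
# Minimum shares taken from the rules of thumb quoted in this article.
# Category names are hypothetical labels for this example.
MIN_SHARE = {
    "data_preparation": 0.40,   # article: 40-50% of total project resources
    "change_management": 0.20,  # article: 20-30% of total budget
}


def budget_warnings(line_items: dict[str, float]) -> list[str]:
    """Flag categories whose share of the total falls below the rule of thumb."""
    total = sum(line_items.values())
    if total <= 0:
        raise ValueError("Budget total must be positive")
    warnings = []
    for category, minimum in MIN_SHARE.items():
        # A category that is absent from the proposal counts as a 0% share.
        share = line_items.get(category, 0.0) / total
        if share < minimum:
            warnings.append(
                f"{category}: {share:.0%} of budget, "
                f"below the {minimum:.0%} rule of thumb"
            )
    return warnings


# Example amounts are made up for illustration.
delivery_heavy = {
    "model_development": 700_000,
    "data_preparation": 200_000,
    "change_management": 100_000,
}
balanced = {
    "model_development": 300_000,
    "data_preparation": 450_000,
    "change_management": 250_000,
}

for warning in budget_warnings(delivery_heavy):
    print(warning)
print(budget_warnings(balanced))
```

A warning here is not proof of a bad proposal, only a reason to ask why the allocation differs from what credible implementations typically need.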

Red Flag #5: Opacity Around Data Usage, Privacy, and Compliance

AI regulation is accelerating globally: most of the EU AI Act's obligations are scheduled to apply from August 2026 (some provisions have applied since 2025), and the US Office of Management and Budget has set procurement compliance obligations with a March 2026 deadline. Any AI proposal that treats compliance as an afterthought is a liability.

Specifically, be wary of proposals that:

- Cannot clearly explain what data the AI system will use, where it comes from, and how it's governed

- Lack documentation on AI bias testing, model transparency, or explainability

- Are silent on data retention, sub-processor relationships, or third-party data sharing

- Cannot articulate how the system handles personally identifiable information

Procurement and legal experts consistently flag that vendors who are unable or unwilling to provide transparency around their models, training data, and decision-making processes should be treated as high-risk. A credible AI agent development proposal or any AI project proposal should proactively address these concerns, not wait for you to raise them.

The cost of getting this wrong extends beyond regulatory fines. Reputational damage, loss of customer trust, and legal exposure can far exceed the cost of the AI project itself.

Talk to our AI consulting team

If you're finding that most of the proposals on your desk have these gaps, the issue may run deeper than the vendors. An AI strategy consultation can help your team define what "good" looks like before the next RFP goes out.

Red Flag #6: Overpromising on Autonomy, Underpromising on Human Oversight

The current wave of enthusiasm around AI agents — autonomous systems that can take actions, make decisions, and execute workflows — has produced a new category of inflated proposal language. Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027.

Be cautious of any proposal that promises fully autonomous AI without a clear human-in-the-loop framework. The most successful enterprise AI implementations maintain human oversight at critical decision points, not because the technology can't function independently, but because organisational trust, accountability, and AI regulatory compliance require it.

A mature proposal acknowledges the limitations of AI explicitly. It defines the boundary between automated decisions and human-reviewed decisions. It includes escalation paths for edge cases. If a proposal reads as though AI will handle everything end-to-end with no human involvement, it's either naive or misleading — and neither is a foundation for a sound investment.
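The six red flags described above can be kept as a simple review checklist so that every proposal is assessed against the same list. The sketch below is one possible shape for that checklist, not a prescribed method: the `six_flag_report` function is hypothetical, and the judgement about which flags a given proposal raises still has to come from human reviewers.

```python
# The six red flags, numbered as in this article.
RED_FLAGS = {
    1: "Vague or missing success metrics",
    2: "Technology-first framing with no business problem anchor",
    3: "Unrealistic timelines and understated data requirements",
    4: "No clear ownership model or change management plan",
    5: "Opacity around data usage, privacy, and compliance",
    6: "Overpromising on autonomy, underpromising on human oversight",
}


def six_flag_report(flags_found: set[int]) -> list[str]:
    """Return the flags raised by reviewers as readable lines, in flag order."""
    unknown = flags_found - set(RED_FLAGS)
    if unknown:
        raise ValueError(f"Unknown flag numbers: {sorted(unknown)}")
    return [f"Red Flag #{n}: {RED_FLAGS[n]}" for n in sorted(flags_found)]


# Hypothetical review outcome: reviewers raised flags 3 and 1.
report = six_flag_report({3, 1})
for line in report:
    print(line)
print(f"{len(report)} of {len(RED_FLAGS)} flags raised")
```

Recording the result per proposal also makes it easier to compare vendors on the same criteria rather than on the polish of their decks.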

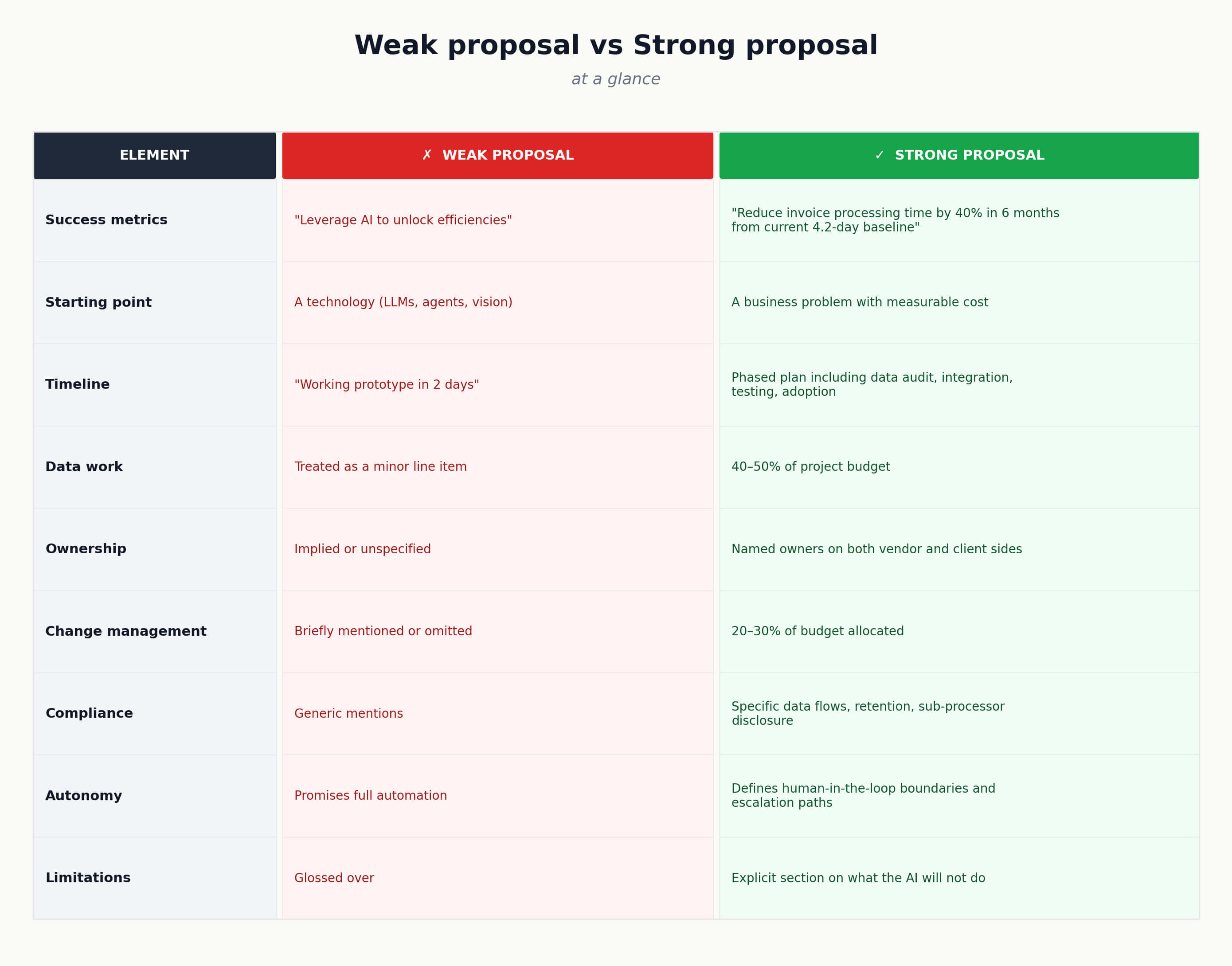

What a Strong AI Proposal Looks Like

Having identified what to avoid, it's worth outlining what a credible proposal looks like. A strong AI proposal includes nine elements:

- A clearly defined business problem with measurable success criteria

- A realistic data-readiness assessment and the gap-closing work required

- An honest timeline accounting for data preparation, integration, testing, and change management

- A defined ownership model with named stakeholders on both vendor and client sides

- A change management and adoption plan with dedicated budget (20–30% of total)

- Transparent documentation on data usage, model governance, compliance, and privacy

- A phased approach — starting with a defined AI pilot and clear criteria for scaling

- An explicit limitations section describing what the AI will not do, and what could go wrong

- A named owner for ROI measurement after deployment, not just at sign-off

The strongest proposals don't oversell. They demonstrate that the vendor or internal team understands the gap between building an AI system and generating business value from one — and they have a plan for closing that gap.

Frequently asked questions about AI proposals

What is an AI proposal?

An AI proposal is a structured document — usually from an external vendor or internal team — outlining how an artificial intelligence system will solve a defined business problem. It typically includes scope, timeline, data requirements, integration plan, success metrics, ownership model, compliance approach, and budget.

How is an AI proposal different from a standard software RFP?

A standard software RFP evaluates a known product against fixed requirements. An AI proposal must additionally address data readiness, model governance, change management, and human-in-the-loop oversight. The complexity is organisational as much as technical, which is why traditional RFP scoring rubrics tend to miss the highest-impact risks.

Who in the organisation should evaluate an AI proposal?

Effective evaluation requires three perspectives: a business owner accountable for outcomes, a technical reviewer who can assess data and integration claims, and a legal or compliance reviewer who can flag data-handling risks. A proposal reviewed only by IT or only by procurement consistently underperforms one reviewed by all three.

How long should an AI proposal evaluation take?

A thorough evaluation typically takes 2–4 weeks for an enterprise-scale initiative, including stakeholder interviews, data-readiness checks, vendor due diligence, and reference calls. Compressing this to days is a common false economy — the cost of a misjudged sign-off is multiples of the time saved.

What budget should I expect to allocate to change management in an AI project?

Credible implementations typically allocate 20–30% of total project budget to adoption, training, and organisational change. Proposals that allocate less are usually optimising for delivery rather than value realisation.

What is "AI-ready data" and why does it matter at the proposal stage?

AI-ready data is data that is sufficiently complete, accurate, governed, and accessible to be used by an AI model in production. Gartner predicts 60% of AI projects unsupported by AI-ready data will be abandoned through 2026. A proposal should treat data preparation as a core workstream, not an assumption.

Protect Your AI Investment at the Proposal Stage

The data is unambiguous: the majority of AI projects fail, and the roots of failure are almost always visible at the proposal stage. Vague success criteria, technology-first thinking, unrealistic timelines, missing ownership models, and compliance gaps don't become less problematic after a contract is signed — they become more expensive.

The executives who generate real returns from AI aren't the ones who approve the most ambitious proposals. They're the ones who ask the hardest questions before a single line of code is written.

If your organisation is evaluating AI proposals and wants an independent perspective on whether they hold up to scrutiny, that's a conversation worth having. A structured review of your AI business proposal pipeline — before commitments are made — is one of the highest-leverage investments a leadership team can make right now.

Book a free AI strategy consultation

Whether you're defining your AI strategy, evaluating vendors, or trying to figure out where AI fits in your organisation, our consulting team works with enterprise leaders to cut through the noise and build AI initiatives that actually deliver. No pitch decks, no hype — just practical guidance grounded in what the data says works.
