Human-in-the-Loop AI: When Automation Needs Human Oversight

As AI moves deeper into high-stakes decisions — credit approvals, clinical diagnoses, autonomous agents — the question is no longer whether to automate, but where human judgment must be guaranteed. This article breaks down what effective human-in-the-loop AI actually looks like in practice, how it differs from checkbox oversight, and how to design it for your organisation's risk profile.

In November 2021, Zillow shut down its algorithmic home-buying business, Zillow Offers, after its AI-driven pricing models systematically overpaid for properties in a shifting market. The result: more than $500 million in write-downs, 2,000 jobs eliminated, and a stock price that lost nearly half its value in days. CEO Rich Barton acknowledged the core failure directly: the company's algorithms could not accurately predict home price fluctuations at the scale required.

What makes the Zillow case instructive isn't the technology failure itself. It's what was missing around it. The pricing models operated with minimal human scrutiny over individual valuations, even as the company scaled purchases into the billions. There was no structured mechanism for experienced real estate professionals to challenge or override the algorithm's pricing on a deal-by-deal basis. The system automated decisions that carried enormous financial exposure — without embedding the human judgment needed to catch what the model couldn't see.

It remains one of the most expensive illustrations of a principle now shaping enterprise AI strategy across every industry: the organisations delivering sustained value from AI aren't the ones automating the most. They're the ones that know precisely where to keep a human in the loop.

Why Human Oversight in AI Systems Has Become a Board-Level Issue

The pressure to embed human-in-the-loop AI workflows isn't coming from engineers. It's coming from regulators, customers, and the C-suite itself.

On the regulatory front, the EU AI Act requires that high-risk AI systems include qualified human oversight, documented decision logs, and the ability to explain outcomes.

But regulation is only half the story. The commercial case is equally compelling. Research from Verizon's 2025 CX Insights Report found a 28-percentage-point gap in customer satisfaction between AI-driven interactions (60%) and those led by humans (88%). That gap doesn't mean AI fails; it means AI without well-designed human escalation paths fails. In addition, 47% of consumers cite the inability to access a human agent as their main source of frustration with automated interactions. Enterprises that close this gap through thoughtful human-in-the-loop design protect both revenue and reputation.

And then there's the sobering internal data. According to one widely cited analysis, 42% of companies abandoned most of their AI initiatives in 2025, up from 17% in 2024. The common thread wasn't bad technology. It was absent governance — no oversight framework, no escalation protocols, and no clear accountability when outputs went wrong.

For senior leaders, the conclusion is clear: human oversight in AI systems is no longer an operational nice-to-have. It's a prerequisite for scaling AI safely and sustainably.

Not sure where human oversight fits in your AI strategy?

BotsCrew's AI Strategy Consulting helps enterprise teams identify high-risk decision points, design governance frameworks, and build AI programmes that scale with confidence.

Book a free consultation

What Is Human-in-the-Loop AI, and What It Isn't

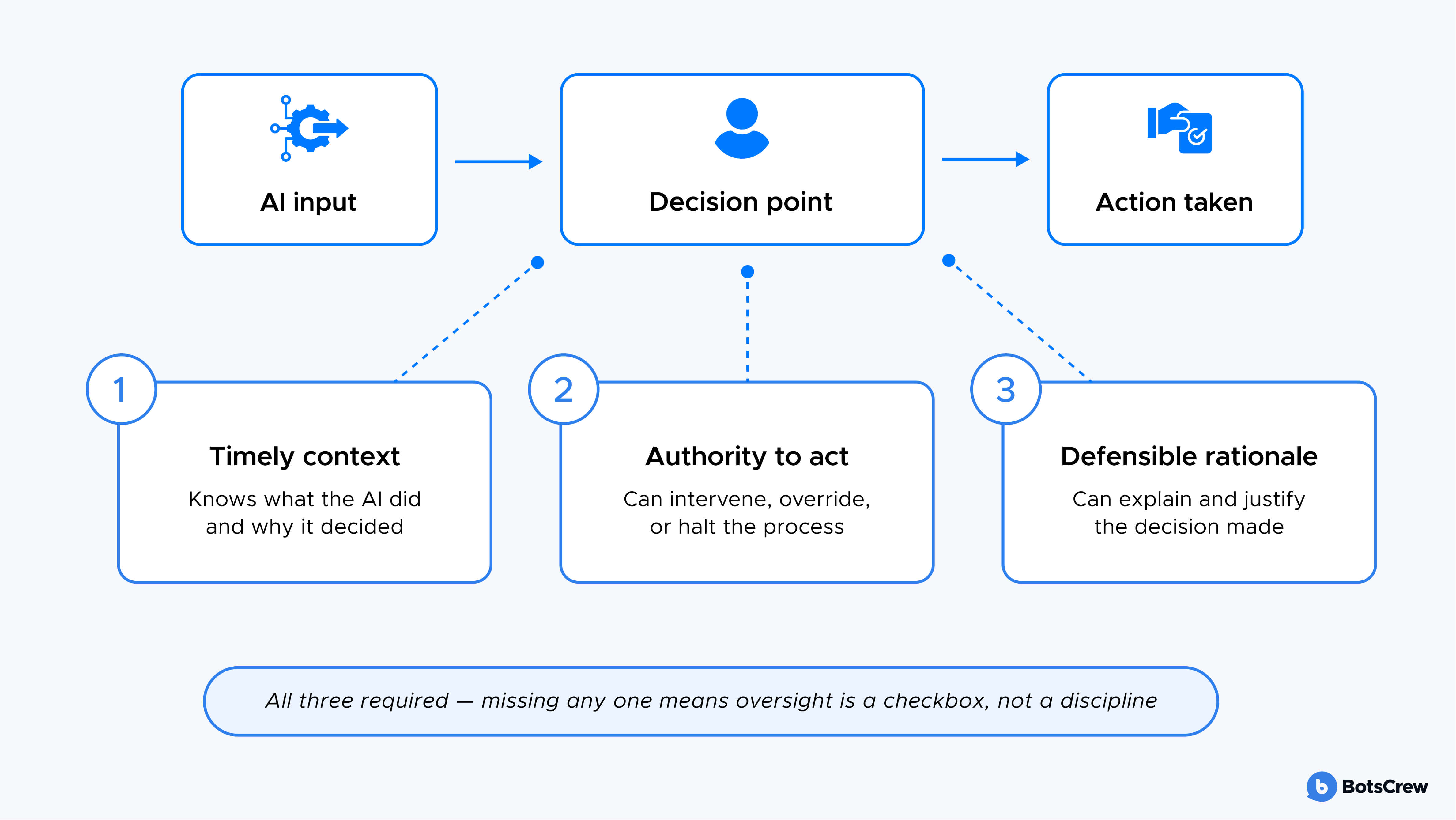

A human-in-the-loop AI workflow places a qualified person at a critical decision point within an automated process. That person has three things: timely context about what the AI has done, the authority to intervene or override, and a defensible rationale for their decision.

This needs to be defined precisely, as most organisations misunderstand it. They assign someone to "oversee" an AI system without training them on what to look for, when to escalate, or how to spot automation complacency — the tendency for people to stop closely checking outputs they’ve approved many times before.

HITL vs. HOTL: Choosing the Right Model

The distinction between human-in-the-loop (HITL) and human-on-the-loop (HOTL) matters for enterprise planning:

Human-in-the-loop means a human must approve or validate a specific action before the AI can proceed. This model suits high-risk decisions such as loan approvals, medical diagnoses, and legal recommendations, where a single error carries significant financial, legal, or safety consequences.

Human-on-the-loop means the AI operates autonomously while a human monitors the process and retains the ability to intervene when necessary. Think of it as the autopilot model: the system flies, but the pilot watches the instruments and can take control at any point. This suits medium-risk, high-volume workflows like content moderation or fraud detection, where speed matters and the risk per transaction is manageable.

The strategic question isn't "HITL or HOTL?" It's: Where in this specific workflow does human judgment need to be guaranteed, not just available?

Most mature enterprises operate both models simultaneously, calibrated to the risk profile of each process.
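To make the difference in execution order concrete, here is a minimal Python sketch of one action gated under each model. The names `OversightMode`, `run_action`, and the `approve` callback are illustrative assumptions, not part of any particular product or framework.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List


class OversightMode(Enum):
    HITL = "human-in-the-loop"   # a human approves before the action runs
    HOTL = "human-on-the-loop"   # the action runs; a human monitors afterwards


@dataclass
class AuditLog:
    entries: List[str] = field(default_factory=list)


def run_action(name: str, mode: OversightMode,
               approve: Callable[[str], bool], log: AuditLog) -> bool:
    """Return True if the action was executed."""
    if mode is OversightMode.HITL:
        # Blocking gate: nothing happens until a person says yes.
        if not approve(name):
            log.entries.append(f"{name}: rejected by reviewer")
            return False
        log.entries.append(f"{name}: approved, executed")
        return True
    # HOTL: execute first, then surface the action for monitoring.
    log.entries.append(f"{name}: executed, queued for monitoring")
    return True


log = AuditLog()
reject_all = lambda name: False  # hypothetical reviewer who rejects everything
ran_loan = run_action("approve_loan_1042", OversightMode.HITL, reject_all, log)
ran_post = run_action("hide_post_77", OversightMode.HOTL, reject_all, log)
print(ran_loan, ran_post)  # False True
```

The same reviewer blocks the loan but not the moderation action, which is the practical meaning of "guaranteed" versus "available" judgment.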

Human-in-the-Loop AI Examples Across Industries

The concept becomes concrete when you see it applied to real operational challenges.

Healthcare: Clinical Validation and Prior Authorisation

Healthcare is arguably the highest-stakes testing ground for human-in-the-loop AI. AI models now analyse patient data and flag early signs of conditions ranging from cancer to cardiovascular disease — but clinical validation still requires a physician's review. In medical imaging, for instance, radiologists review AI-highlighted areas in scans to confirm accuracy, add clinical context, and ensure diagnostic explanations are medically sound.

On the administrative side, health systems are using HITL models to transform prior authorisation, a process that accounted for nearly 50 million Medicare Advantage determinations in 2023 alone. AI handles data extraction, form pre-filling, and initial eligibility checks, while trained staff review edge cases affecting patient access to care. One health system reported a 91% success rate on AI-assisted prior authorisation submissions, with 15 minutes saved per successful case.

The pattern here is instructive: AI handles volume and speed; humans handle judgment and exceptions.

Financial Services: Credit Decisions and Fraud Detection

In finance, human-in-the-loop isn't just good practice; in many jurisdictions it is effectively a legal requirement. In the US, for example, the Equal Credit Opportunity Act requires lenders to provide clear, specific reasons for credit denials. Many high-performing AI models can't meet this standard on their own because they function as black boxes.

The solution gaining traction is a hybrid model: AI processes applications at scale and flags decisions that exceed defined risk thresholds, then human analysts review those flagged cases, produce natural-language explanations, and ensure regulatory defensibility. This preserves automation efficiency while restoring accountability. Similar dynamics are emerging in fraud detection, where sophisticated schemes often require the contextual reasoning that algorithms alone can't provide.
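One way to picture this hybrid model is the sketch below. It assumes a hypothetical model that returns a risk score between 0 and 1 and an arbitrary review threshold of 0.35; the function names and the threshold are illustrative only. The model never denies an application on its own, and an analyst cannot finalise a denial without written reasons.

```python
from dataclasses import dataclass
from typing import List, Optional

REVIEW_THRESHOLD = 0.35  # assumed cut-off; at or above it an analyst must review


@dataclass
class CreditDecision:
    application_id: str
    risk_score: float                    # hypothetical model output in [0, 1]
    outcome: str                         # "approve", "deny", or "needs_review"
    reasons: Optional[List[str]] = None  # required for every denial


def triage(application_id: str, risk_score: float) -> CreditDecision:
    """Auto-approve low-risk applications; flag everything else for an analyst."""
    if risk_score < REVIEW_THRESHOLD:
        return CreditDecision(application_id, risk_score, "approve")
    return CreditDecision(application_id, risk_score, "needs_review")


def finalise_denial(decision: CreditDecision, reasons: List[str]) -> CreditDecision:
    """An analyst can deny a flagged case only by supplying specific reasons."""
    if decision.outcome != "needs_review":
        raise ValueError("only flagged decisions can be finalised by an analyst")
    cleaned = [r.strip() for r in reasons if r.strip()]
    if not cleaned:
        raise ValueError("a denial needs at least one specific reason")
    return CreditDecision(decision.application_id, decision.risk_score, "deny", cleaned)


flagged = triage("A-17", 0.62)
denied = finalise_denial(flagged, ["Debt-to-income ratio above policy limit"])
print(flagged.outcome, denied.outcome)  # needs_review deny
```

Rejecting blank reasons in code is a small design choice, but it turns the explanation requirement from a policy statement into something the workflow enforces.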

Customer Service: Confidence-Based Routing

Enterprise contact centres offer one of the clearest demonstrations of how human-in-the-loop AI workflows operate at scale. AI handles routine enquiries — order tracking, password resets, account balance checks — while routing complex or ambiguous interactions to human agents based on confidence scoring.

The design principle is straightforward: when the AI's confidence in its response drops below a defined threshold, the interaction escalates. But companies must take care not to lose the conversation thread when a customer moves from bot to human. As Stacy Sherman, Founder of Doing CX Right, put it: “Someone calls customer service and gets a chatbot that takes information from them. Once they get to a human, too often, they’re asked the same questions. There’s a lack of alignment and consistency between the teams that handle the AI responses and the ones that handle the human ones. This causes friction with customers.”
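A rough sketch of confidence-based routing with context carried across the handoff might look like this. The threshold of 0.75, the `Conversation` and `Handoff` structures, and the `escalate` function are assumptions made for illustration; a real contact-centre platform will have its own equivalents.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

CONFIDENCE_THRESHOLD = 0.75  # assumed value; tune per workflow


@dataclass
class Conversation:
    customer_id: str
    transcript: List[str] = field(default_factory=list)
    collected: Dict[str, str] = field(default_factory=dict)  # facts already gathered


@dataclass
class Handoff:
    customer_id: str
    summary: str
    collected: Dict[str, str]
    transcript: List[str]


def escalate(conv: Conversation, confidence: float) -> Optional[Handoff]:
    """Return None if the bot should continue, else a Handoff with full context."""
    if confidence >= CONFIDENCE_THRESHOLD:
        return None
    summary = "; ".join(f"{k}={v}" for k, v in sorted(conv.collected.items()))
    # Copy the context so the agent sees everything the bot already asked for.
    return Handoff(conv.customer_id, summary, dict(conv.collected), list(conv.transcript))


conv = Conversation("C-9", ["Where is my refund?"],
                    {"order_id": "8841", "issue": "refund"})
handoff = escalate(conv, 0.41)
print(escalate(conv, 0.93))  # None
print(handoff.summary)       # issue=refund; order_id=8841
```

The point of the `Handoff` object is that the human agent starts with the order number and issue already in hand, rather than asking the customer to repeat them.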

How to Design Effective Human-in-the-Loop AI Workflows

Knowing where to insert human oversight is the strategic decision. Designing it to actually work is the operational one. Five principles separate effective HITL implementations from the ones that create false confidence.

Map Decision Points to Risk Profiles

Not every AI output needs a human review. Apply oversight where the consequences of error are highest — regulatory exposure, customer harm, financial loss, reputational damage. A simple information-retrieval AI may need minimal supervision, while an AI making credit determinations or clinical recommendations requires embedded review gates.
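As a simple illustration of mapping a decision point to an oversight level, the sketch below scores each of the four consequence types on an assumed 0 to 3 scale and lets the worst score decide. The scale, the tier names, and the `RiskProfile` structure are hypothetical and would need calibrating to your own risk framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskProfile:
    # Each dimension is scored 0 (negligible) to 3 (severe); the scale is an assumption.
    regulatory: int
    customer_harm: int
    financial: int
    reputational: int


def oversight_level(profile: RiskProfile) -> str:
    """Pick the oversight tier from the single worst consequence score."""
    worst = max(profile.regulatory, profile.customer_harm,
                profile.financial, profile.reputational)
    if worst >= 3:
        return "review gate before every action"
    if worst == 2:
        return "monitoring with sampled review"
    return "periodic audit only"


faq_search = RiskProfile(regulatory=0, customer_harm=1, financial=0, reputational=1)
credit_decision = RiskProfile(regulatory=3, customer_harm=3, financial=2, reputational=2)
print(oversight_level(faq_search))       # periodic audit only
print(oversight_level(credit_decision))  # review gate before every action
```

Using the maximum rather than a sum or average is deliberate here: one severe exposure should not be diluted by low scores elsewhere.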

Train the Humans, Not Just the Model

A recurring failure pattern is organisations that invest heavily in model development but treat human oversight as a staffing exercise. Effective HITL requires trained reviewers who understand the system's capabilities and limitations, know what good and bad outputs look like, and have practised decision-making under realistic conditions. If your oversight process only exists in a diagram, you don't have oversight; you have a manual.

Design Against Automation Complacency

When humans review AI outputs that are correct 95% of the time, they learn to approve without scrutinising. This is automation complacency, and it's the quiet killer of HITL effectiveness. Counter it with structured review protocols: require reviewers to articulate why they're approving, rotate review responsibilities, and introduce deliberate "challenge" scenarios to keep attention calibrated.
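The "challenge" scenario idea can be sketched as follows: mix items that are known to be wrong into the review queue, then measure how many of them each reviewer rejects. The function names and the convention that a reviewer returns `True` to approve are assumptions for this example.

```python
import random
from typing import Callable, List, Tuple


def build_queue(real_items: List[str], challenges: List[str],
                seed: int = 0) -> List[Tuple[str, bool]]:
    """Mix known-bad challenge items into the queue; the bool marks a challenge."""
    queue = [(item, False) for item in real_items] + [(c, True) for c in challenges]
    random.Random(seed).shuffle(queue)  # reviewers should not see a pattern
    return queue


def catch_rate(queue: List[Tuple[str, bool]],
               reviewer: Callable[[str], bool]) -> float:
    """Share of challenge items the reviewer rejected (True means approve)."""
    challenges = [item for item, is_challenge in queue if is_challenge]
    if not challenges:
        raise ValueError("queue contains no challenge items")
    caught = sum(1 for item in challenges if not reviewer(item))
    return caught / len(challenges)


queue = build_queue(["ok-1", "ok-2", "ok-3"], ["bad-1", "bad-2"])
rubber_stamp = lambda item: True                    # approves everything
careful = lambda item: not item.startswith("bad")   # rejects the seeded errors
print(catch_rate(queue, rubber_stamp), catch_rate(queue, careful))  # 0.0 1.0
```

A falling catch rate on seeded items is an early, measurable sign of complacency, well before a real error slips through.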

Build Feedback Loops That Improve the System

Human oversight shouldn't just catch errors — it should make the AI better. Every human correction, override, or escalation is training data. Design your workflow so that human judgments feed back into model refinement, creating a continuous improvement cycle that makes the system more accurate and the human's job more focused on genuine edge cases over time.
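In code terms, the feedback loop starts with recording each review in a form that can later be used for retraining. The sketch below assumes a hypothetical `ReviewEvent` record and a plain list of dictionaries as the training format; how those examples reach the model depends entirely on your training pipeline.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ReviewEvent:
    input_text: str
    model_output: str
    human_output: Optional[str]  # None means the reviewer accepted the output as is


def to_training_examples(events: List[ReviewEvent]) -> List[dict]:
    """Turn every review into a labelled example and flag the corrected ones."""
    examples = []
    for e in events:
        corrected = e.human_output is not None and e.human_output != e.model_output
        examples.append({
            "input": e.input_text,
            "label": e.human_output if corrected else e.model_output,
            "was_corrected": corrected,
        })
    return examples


events = [
    ReviewEvent("Reset my password", "send_reset_link", None),
    ReviewEvent("Cancel and refund", "send_reset_link", "open_refund_case"),
]
examples = to_training_examples(events)
override_rate = sum(e["was_corrected"] for e in examples) / len(examples)
print(examples[1]["label"], override_rate)  # open_refund_case 0.5
```

Keeping the accepted outputs alongside the corrected ones matters: confirmations are training signal too, and the override rate only means something against the full set of reviews.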

Measure Oversight Effectiveness

Track the metrics that reveal whether your HITL process is working: escalation rate (how often AI routes to humans), context retention during handoff, resolution time after escalation, and the satisfaction delta between AI-resolved and human-resolved interactions. A rising escalation rate may indicate the AI needs retraining. A shrinking one could mean the feedback loops are working — or that complacency is setting in. Context is everything.
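These four metrics can be computed from a simple interaction log. The sketch below assumes a hypothetical `Interaction` record with a 0 to 100 satisfaction score; the field names and sample figures are made up for illustration and are not drawn from any of the reports cited above.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional


@dataclass
class Interaction:
    escalated: bool
    context_retained: Optional[bool]  # only meaningful when escalated
    minutes_to_resolve: float
    satisfaction: float               # assumed 0-100 survey score


def oversight_metrics(items: List[Interaction]) -> dict:
    """Compute escalation rate, context retention, resolution time, and CSAT delta."""
    escalated = [i for i in items if i.escalated]
    ai_only = [i for i in items if not i.escalated]
    if not escalated or not ai_only:
        raise ValueError("need at least one escalated and one AI-resolved interaction")
    return {
        "escalation_rate": len(escalated) / len(items),
        "context_retention": mean(1.0 if i.context_retained else 0.0 for i in escalated),
        "avg_minutes_after_escalation": mean(i.minutes_to_resolve for i in escalated),
        "satisfaction_delta": mean(i.satisfaction for i in escalated)
                              - mean(i.satisfaction for i in ai_only),
    }


sample = [
    Interaction(False, None, 2.0, 60.0),
    Interaction(False, None, 3.0, 62.0),
    Interaction(True, True, 9.0, 88.0),
    Interaction(True, False, 11.0, 80.0),
]
metrics = oversight_metrics(sample)
print(metrics["escalation_rate"], metrics["satisfaction_delta"])  # 0.5 23.0
```

None of these numbers is meaningful in isolation; tracking them together over time is what lets you tell a healthy drop in escalations from a complacent one.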

Moving From Framework to Practice

The enterprises that will lead in the next phase of AI adoption are not the ones deploying the most models. They're the ones building the governance structures that allow those models to operate with trust — from regulators, customers, and their own leadership teams.

Human-in-the-loop AI is how that trust gets operationalised. Not as a constraint on automation, but as the condition that makes meaningful automation possible.

If your organisation is beginning to evaluate where human oversight belongs in your AI strategy, the right next step is an honest assessment of your current workflows: Where are the high-risk decision points? Who is reviewing AI outputs today — and are they equipped to do it well? The answers often reveal gaps that are straightforward to close, once they're visible.

BotsCrew works with enterprise teams to design AI governance frameworks that balance automation speed with the oversight your regulators, customers, and leadership teams expect. If you're ready to move from theory to practice, book a free AI strategy consultation to map your next steps.
