From Claude AI Prototype to Production: The Hidden Gap

If you’ve built a working Claude prototype and everything started breaking the moment you tried to improve it, you’re not alone. This article explains why that happens—and what it actually takes to move from prototype to a production-ready AI system.

TL;DR

💡 Most non-engineers who build a working Claude prototype hit the same wall at roughly the same point. The codebase outgrows what any prompt can hold in context, integrations get flaky, users hit bugs you can't reproduce, and changes start breaking things that used to work. The wall is structural, not personal, and the fix isn't to rewrite or to learn engineering on a live system. It's to preserve the prompts, workflows, and product intelligence that already work, and rebuild the scaffolding underneath: authentication, observability, integrations, error handling, deployment.

You were adding one small feature. You've been at it for three days.

Every time Claude fixes the thing you asked about, something else drifts out of place — the login behaves strangely, a calculation that was right last week is wrong now, a button that used to work doesn't. You fix that, and something further downstream breaks. You're farther from a working system than you were when you started, the codebase is starting to feel like a place you don't fully recognize anymore, and a small voice is asking whether you actually know what you're doing.

If that's roughly where you are — or somewhere in the same neighbourhood — this piece is for you.

What you're hitting is structural, not personal: the wall almost every non-engineer AI builder reaches when a vibe-coded prototype outgrows the tool pattern that built it.

The person you are, someone building real tools without being a traditional software engineer, barely existed as a category two years ago. The people shipping internal apps, customer-facing products, and AI assistants that did real work were either engineers or were paying engineers. The idea that a founder, an operator, or a head of department could produce a working AI product in a weekend without writing a line of code themselves would have sounded implausible. Then Claude, Claude Code, Cursor, Lovable, v0, and the rest collapsed the time from idea to working prototype from months to afternoons, and a generation of non-engineers discovered they could build. Gartner projects that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025, and a meaningful share of that growth is driven by non-engineers.

The results are frequently excellent. Whole categories of internal tooling are now being produced by the people who actually use them, which is a genuine shift in how software gets made. But the second leg of the journey, from "I built it" to "it runs reliably, for everyone, in production," isn't well marked yet. There's no standard playbook, no obvious moment to stop and regroup, and no widely understood vocabulary for what's actually involved. Most people who walk this leg hit a wall in roughly the same place, for the same structural reasons.

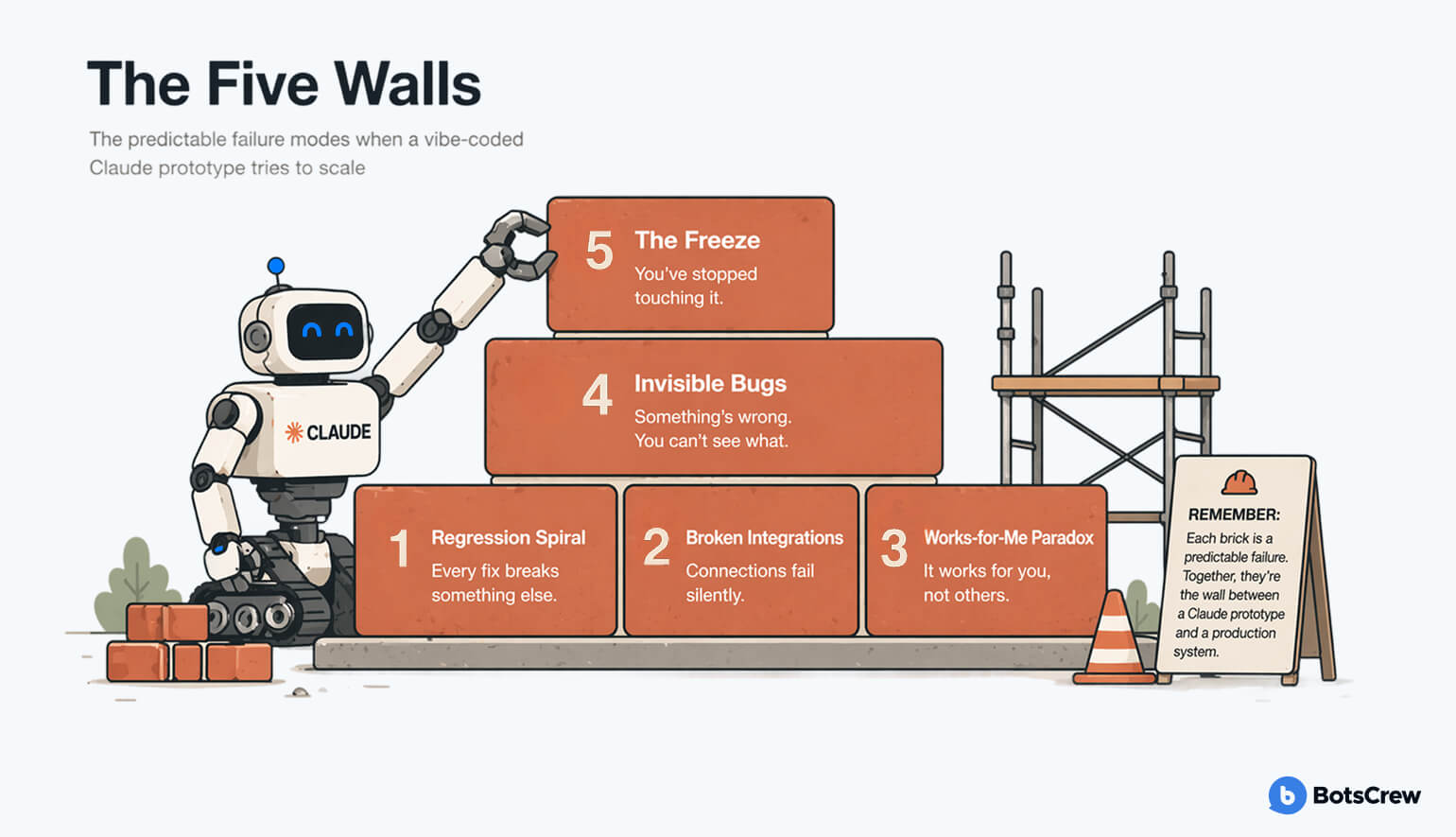

The Five Walls of Vibe-Coded AI Prototypes

Wall 1 — The regression spiral

You can't add new features without old ones breaking. You ask Claude for a change, you get the change, and two other things that used to work are now quietly wrong. You patch them; something else breaks. You've stopped shipping forward and started running in place. The codebase has grown past the point where Claude can hold its whole shape in context, and every edit is happening without a clear picture of what it might disturb. You don't know how to get back to solid ground.

Wall 2 — Integrations that don't work, or work in ways you can't trust

Claude needs to talk to the systems where the real work lives — your CRM, your documents, your ticketing queue, your calendar. One of two things has happened. Either you've spent days fighting authentication errors, OAuth flows that loop back on themselves, and MCP configurations that almost work, and you've started to suspect the whole stack assumes a layer of knowledge you don't have. Or you got the connection running and it's subtly wrong — partial data, silent failures on certain records, responses Claude then misinterprets. Either way, you can't tell whether the problem is in the integration, in the model, or in your prompt, and you can't fix it without understanding all three.

Wall 3 — It works for you, but not for everyone else

The demo was great. You rolled it out to three colleagues. One of them says "it doesn't work for me," and when you try it, it works perfectly. You can't reproduce their problem. You don't have logs that would show what they actually did. You're asking them to send screenshots and describe the steps, and nothing lines up. You don't even know where to start looking, because the system doesn't really tell you what's happening inside it.

There's also a common problem we see before people even get to that point: they've built something that works locally, but they can't easily share it with their team because it lives as an HTML file on their laptop. We built a simple Claude skill that solves exactly that: sharable.link. You say /share in Claude and get a clean public URL anyone can open in a browser. It's free, built by BotsCrew, and covers the "how do I even get this in front of other people" step before the harder scaling questions kick in.

Wall 4 — Something is wrong and you can't tell what

A user flagged an odd output. Or the numbers your tool produces don't quite match the numbers someone pulled manually. Or things feel slower than they used to. You're not sure if it's a bug, a one-off, or a symptom of something bigger. Every attempt to investigate is guesswork because there's no reliable way to see what the system is doing when you're not watching. You're debugging by vibes, which is what got the thing built in the first place, and it has stopped being enough.

Wall 5 — You're scared to touch it

The app works — mostly. You know there are gaps. But the last few changes were so bruising that you've quietly stopped making them. You're not shipping anymore. You're maintaining. The prototype has gone from a fast-moving experiment to a fragile artefact you tiptoe around, and you can feel it aging while you decide what to do.

If one of those landed, read on. If several did, definitely read on.

If you recognized yourself in more than one of these, you don’t need more guessing — you need visibility.

👉 See what’s actually going on under the hood: get a structured breakdown of your prototype’s weak points before you try to fix them.

Book a free consultation

Why Does the Wall Show Up?

The wall has a predictable shape. It shows up at roughly the same point for almost everyone who builds this way, and it shows up for specific structural reasons worth understanding.

Vibe coding with Claude works brilliantly up to a point. It works because Claude is great at generating code for problems it can see in full — a single feature, a self-contained script, a small app with a handful of moving parts. You describe what you want, Claude writes it, you iterate until it runs. The feedback loop is so fast that it feels like building should always feel this way.

Then the system gets bigger. The codebase accumulates components, data, users, integrations, edge cases — a dozen invisible things Claude can't see all at once. A change you request no longer happens in a vacuum; it lands in a context the model doesn't fully have access to. Locally, the code Claude writes is still correct. Globally, it starts stepping on things it couldn't know about. You experience that as "Claude keeps breaking stuff." What's actually happening is that the coordination problem has grown past what the tool pattern can hold.

Professional engineering teams deal with this in specific ways. They write tests so they immediately know when a change breaks something. They keep architectural documentation so new work has context. They use version control deliberately, so they can get back to a known-good state in thirty seconds. They instrument their code so when something goes wrong at 2am, there's a record of what happened. These practices aren't glamorous. They exist precisely to compensate for the fact that nobody — no human, no model — can hold a full production system in their head all at once.
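To make the first of those practices concrete, here is a minimal sketch of a regression test in Python. The function `invoice_total` and the figures in it are hypothetical stand-ins for any calculation your prototype already gets right. The only point is that the known-good answer is written down once, so a later change that disturbs it fails loudly instead of quietly.

```python
from decimal import Decimal


def invoice_total(line_items, tax_rate):
    """Hypothetical business calculation: sum (price, quantity) pairs, then add tax."""
    subtotal = sum(Decimal(str(price)) * quantity for price, quantity in line_items)
    total = subtotal * (Decimal("1") + Decimal(str(tax_rate)))
    # Round to whole cents so the result is stable and comparable.
    return total.quantize(Decimal("0.01"))


def test_invoice_total_matches_known_good_value():
    # 53.98 was recorded while the feature was known to work.
    # If an unrelated change alters this result, the test fails immediately.
    assert invoice_total([(19.99, 2), (5.00, 1)], 0.20) == Decimal("53.98")
```

Saved in a file named something like `test_invoice.py`, a test runner such as pytest will pick this up and run it. A handful of tests like this, run before you accept each change, is usually enough to show which edit caused a regression.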

A vibe-coded prototype usually has none of these practices, because you didn't need them in phase one. The absence is fine until suddenly it isn't. The wall is the point where their absence starts costing you more time than it ever saved.

Want a clearer picture of how production systems avoid this entirely?

👉 We can walk you through what’s missing in your current setup — and what matters vs. what doesn’t.

What Does a Production-Ready AI Prototype Actually Need?

A Claude prototype and a production-ready system answer two different questions. The prototype answers "is this idea any good?" The production system answers "can this idea run reliably, at scale, without me in the room?" That second question is a different discipline. Not a harder one, not a more important one, but a different one, with different tools, different habits, and different instincts.

The reason "just prompt Claude harder" stops working is not that Claude has gotten worse at coding. It's that the second question is less about code generation and more about system design — knowing what to build around the code. Authentication, observability, error handling, state management, integrations with other systems, deployment pipelines, cost controls — these are the infrastructure layers that make a prototype survivable outside your laptop. They aren't usually the kind of thing you can prompt your way to, because you have to know they exist to ask for them, and you have to know what good looks like to judge whether the answer is right.
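As an illustration of two of those layers, observability and error handling, here is a small Python sketch that wraps any flaky call (an integration request, for example) so that every attempt is logged and transient failures are retried with exponential backoff. The name `call_with_retry`, its parameters, and the log fields are all assumptions made for this example, not part of any particular library, and a real system would retry only on errors it knows to be temporary.

```python
import json
import logging
import time

logger = logging.getLogger("prototype")


def call_with_retry(operation, name, attempts=3, base_delay=0.5, sleep=time.sleep):
    """Run operation(), logging each attempt and retrying failures with backoff."""
    for attempt in range(1, attempts + 1):
        started = time.monotonic()
        try:
            result = operation()
        except Exception as exc:  # Broad on purpose for the sketch; narrow this in real code.
            logger.warning(json.dumps(
                {"event": name, "attempt": attempt, "ok": False, "error": repr(exc)}
            ))
            if attempt == attempts:
                raise  # Out of attempts: surface the failure instead of hiding it.
            # Wait 0.5s, 1s, 2s, ... before trying again.
            sleep(base_delay * 2 ** (attempt - 1))
        else:
            elapsed = round(time.monotonic() - started, 3)
            logger.info(json.dumps(
                {"event": name, "attempt": attempt, "ok": True, "seconds": elapsed}
            ))
            return result
```

Used as `call_with_retry(lambda: fetch_customer(customer_id), "crm_lookup")`, where `fetch_customer` is whatever hypothetical function talks to your CRM, this leaves a record of what was tried, how long it took, and why it failed. That record is what lets you answer "it doesn't work for me" without guessing.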

None of this means the work you've done gets thrown away. The opposite. The prompts you iterated on, the workflows you refined, the user experience you tuned, the feedback you've gathered — these are the high-value parts of what you built, and they stay. What typically gets rebuilt is the scaffolding underneath: the invisible parts you didn't realize you needed because your prototype was never pushed hard enough to require them.

A good hardening project preserves the shape of your idea and installs the foundation it deserves. It usually takes weeks, not quarters. What it absolutely should not be is a rewrite, because a rewrite loses the hard-won product intelligence baked into the prototype you already have.

What Should You Do Next?

A few concrete moves, in the order most people actually need them.

Don't try to learn engineering on a live system. The moment you have users depending on this, the prototype is no longer a learning environment. Every mistake you make while learning has real consequences — for users, for data, sometimes for the business. The compound interest on "I'll figure it out" gets expensive fast.

Don't rewrite from scratch. This is the most common overreaction. Someone looks at a fragile vibe-coded prototype and says "we need to rebuild this properly." Then six months disappear into a rebuild that usually ends up worse than the original, because the rebuilders didn't inherit the thousand small decisions the original builder made that actually made the thing useful. The prompts, the flow, the edge cases you handled because a user complained — that's the product. The code is the delivery mechanism. Replace the delivery mechanism; keep the product.

Get outside eyes on what you have. Before doing anything irreversible, get someone who has shipped production AI before to actually look at what you've built and tell you the truth. Not to criticize. To triage. What's solid, what's fragile, what's actively unsafe, what's worth rebuilding, what's worth leaving alone. An honest triage turns an overwhelming mess into three or four concrete projects, most of which are much smaller than they look from the inside.

Get a concrete read on your own gaps. If you want to do a first pass yourself, BotsCrew's vibe-coded prototype hardening checklist walks through fourteen diagnostic questions you can answer yes or no. It won't fix anything, but it will turn vague anxiety into a specific list of what's actually missing — and that list is what anyone helping you will want to see first.

Bring in help that's done this before. This is the move most people delay longest, usually because they feel like they should be able to figure it out themselves. Production AI engineering is a specialization. The people who have done this many times can see in an afternoon what would take you months to discover — and, more importantly, they know what not to touch.

The Honest Close

The wall is real, and it's the part of the journey nobody warned you about. You built something that worked. That's the hard part. The transition from "it works" to "it works at scale, for everyone, without me watching it" is a different kind of work, and the people who navigate it well are usually the ones who stop trying to do it alone the moment they recognize what it actually is.

BotsCrew works specifically with teams in the exact position you might be in right now — with a Claude prototype that proved the idea, hit the wall, and needs to become something the business can depend on. The goal is never to throw away what you've built. It's to put the right foundation underneath it, so the thing you made can actually do what you already know it can do.

If any of this sounds like where you are, a short conversation is usually enough to know whether help makes sense. You don't have to keep running in place.

Common Questions

Should I rewrite my Claude prototype from scratch?
Usually no. Rewrites lose the prompts, workflows, and edge-case handling that made the prototype useful in the first place. A good hardening project preserves those and rebuilds the scaffolding underneath.

How long does a hardening project take?
Typically weeks, not quarters, when done by an experienced team. Scope depends on which of the Five Walls you've hit and how many.

Can I learn engineering and fix it myself?
You can, but not on a live system with real users. The compound cost of learning on production (in data, trust, and rework) almost always exceeds the cost of bringing in someone who has done it before.

What's the difference between a Claude prototype and a production system?
A prototype proves the idea is worth building. A production system proves the idea can run reliably, at scale, without its creator in the room. Different disciplines, different skills.

If you're somewhere in this process, you don't have to figure it out alone.

Book a short working session with our team — we'll map what you've built, identify what's solid, and show you the fastest path to production without losing what already works.

Book a free consultation